Most organisations are in a hybrid situation regarding their use of cloud services. The serious ones, meaning the larger ones and especially the strictly regulated ones, pay extra attention when cloud services are used. Much of that comes from the perspective that cloud is a ‘public’ service, which implies perceived higher risks when using it. And of course, in one way or another, using cloud computing is a form of outsourcing. So companies may have ‘cloud policies’, ‘cloud competence centers’, and specific ‘cloud risk assessments’, for instance. All of this of course begins with some sort of definition: to what do these policies, centers, and assessments apply, and to what not? Enter the 2011 definition of Cloud Computing by the US National Institute of Standards and Technology (NIST), ancient from the perspective of cloud developments but often still in use, which defines concepts such as public and private cloud, Infrastructure as a Service (IAAS), Platform as a Service (PAAS), etc.

TL;DR

The NIST definition of Cloud Computing from 2011 has become so much of an oversimplification that it is more often than not unhelpful, e.g. when you try to base your policies on it. Cloud or not-cloud was important when we had no clue what we were dealing with and while the technology was maturing, but that phase has passed. So, forget about ‘IAAS’ and ‘PAAS’, and end your ‘cloud policies’ or cloud-specific procedures. Instead, concentrate on managing the key generic issue underlying them: the ever more complex mixes of owned and outsourced algorithms and data, regardless of oversimplified ‘cloud’ concepts.

NIST’s 2011 definition is thankfully short (about one and a half pages) and says that something is cloud computing if it has the following characteristics:

- On-demand self-service. You can unilaterally provision what is needed, without interaction with the service provider.

- Broad network access. It is widely available through standard mechanisms from a variety of platforms (e.g. mobile, desktops).

- Resource pooling. The provider’s physical resources are pooled dynamically between you and other consumers, and you have no control over the exact location of your computing resources inside the provider’s data center (though you may be able to choose a region, data center, etc.).

- Rapid elasticity. Capabilities can be rapidly provisioned and released based on actual demand.

- Measured service. The virtual resources used are measured, typically leading to pay-per-use.

These are called essential characteristics by NIST, which suggests that an offering needs all of them to fall under the NIST definition, a suggestion supported by this part of a footnote: “A cloud infrastructure is the collection of hardware and software that enables the five essential characteristics of cloud computing.”
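Read strictly, ‘essential’ turns the definition into a conjunction: an offering is cloud only if every one of the five characteristics holds. A minimal Python sketch of that reading follows; the `Offering` type, its field names, and the example offering are hypothetical illustrations, not anything NIST itself defines.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Offering:
    """Hypothetical record of which NIST essential characteristics an offering has."""
    on_demand_self_service: bool
    broad_network_access: bool
    resource_pooling: bool
    rapid_elasticity: bool
    measured_service: bool


def is_nist_cloud(offering: Offering) -> bool:
    # Strict reading of 'essential': all five must hold; one missing is enough to fail.
    return all(getattr(offering, f.name) for f in fields(offering))


def missing_characteristics(offering: Offering) -> list[str]:
    # Names of the characteristics the offering lacks, in declaration order.
    return [f.name for f in fields(offering) if not getattr(offering, f.name)]


# Hypothetical example: a single-tenant setup whose hardware is not shared.
dedicated = Offering(
    on_demand_self_service=True,
    broad_network_access=True,
    resource_pooling=False,
    rapid_elasticity=True,
    measured_service=True,
)
print(is_nist_cloud(dedicated))            # False
print(missing_characteristics(dedicated))  # ['resource_pooling']
```

Under this strict reading, a single missing characteristic, such as resource pooling in a dedicated single-tenant setup, is enough to put an offering outside the definition.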

Cloud computing — according to NIST — is delivered in a number of Service Models:

- Infrastructure as a Service (IAAS). You just get fundamental computing resources, such as processing power (e.g. a virtual machine), networking, and storage. The rest is up to you, including the operating system. You do not control the underlying hardware in any way.

- Platform as a Service (PAAS). You get a platform on which you can deploy your own application(s). You have only limited control over the platform (its settings). As with IAAS, you do not control the underlying hardware in any way, and that lack of control extends to the operating system and any platform that is (invisibly) needed to support the platform you actually use. (Don’t forget: it’s platforms all the way down.)

- Software as a Service (SAAS). It is the provider, not you, who deploys; you just use. You may have limited configuration options.

And, finally, it comes in the following Deployment Models:

- Private cloud. The cloud infrastructure is exclusively for you.

- Community cloud. The cloud infrastructure is dedicated to a community of users, say all the municipalities in a country. This is basically public cloud, but with a limited set of users that are allowed to use it and possibly some shared ideas about what the cloud must deliver (e.g. security-wise). Nobody talks about this much anymore.

- Public Cloud. Everybody can use it.

- Hybrid Cloud. You are using multiple deployments concurrently (private, community, public), but these are bound together in some sort of abstraction so you can do things like load balancing between clouds.

So, what is wrong with it? A number of things, but you might want to first read the post below, as it presents a more realistic depiction of what you get and do when using IAAS, PAAS and SAAS:

The many lies about reducing complexity part 2: Cloud

That standard image that explains the difference between on-premises, IAAS, PAAS, and SAAS in the cloud? You know what, it is hugely misleading. Here is the real deal.

So, back to NIST. What is wrong with it? Here are a few issues:

- If you have read the post above, it should be clear that NIST’s definition of PAAS ignores the reality that PAAS is always also partly IAAS. Pure PAAS does not exist. If you use a PAAS, say a web application server from Azure, you still have to manage networking, storage, firewalls, and a whole lot of other aspects.

- NIST’s definition of ‘private cloud’ is outdated. These days we have ‘on-premises private cloud’, where we use the cloud pattern in our own data center; we call that a DevOps Ready Data Center (DRDC). What NIST calls a ‘private cloud’ might these days better be called a ‘virtual private cloud’ (again, see the post above).

- NIST’s definition of ‘hybrid cloud’ (using two different ‘external’ clouds, with data and application portability) is both outdated and unrealistic. It is outdated because hybrid these days is generally seen as a mixture of cloud and on-premises, and unrealistic because true portability between clouds (say Azure and AWS) is nonexistent and will remain so, as their differences underpin their key competitive advantage.

- The essential characteristic resource pooling contradicts the deployment model private cloud. Also, the ‘unilateral’ aspect of self-service may in some cases of (virtual) private cloud be less than absolute.

- Rapid elasticity is also not something you get by definition. If I create an IAAS resource (say, a server) in the public cloud, it doesn’t automatically scale, neither vertically (it gets bigger) nor horizontally (we get more of them). Some SAAS and PAAS services have rapid elasticity, others do not, and those that do not are still SAAS or PAAS. There are also often hard limits, initially hidden, on that elasticity (e.g. no more than x role bindings per subscription in Azure), which you run into as soon as you use it in a really professional setting (such as a regulated entity with strict security, risk & control requirements).

- Broad network access, defined by NIST as access from a variety of platforms such as desktops and mobile devices, is not necessary by definition. If I put my data lake into the cloud, I can choose to connect to it only privately. As also explained in the post mentioned above, ‘private cloud’ has nothing to do with ‘private links’ (but confusion via language is rife). If I do not make use of broad network access, it still is cloud. Of course, one could argue that the service must offer it even if you don’t use it. But suppose some data lake company only offers a setup where no client even gets that ‘broad’ option as NIST describes it: should we still call it cloud? Certainly. Broad network access for cloud management (the self-service aspect) may be essential, but probably only for public cloud.

Is it important to be precise about all of this? Technologically: no, we just do what we need to do to get stuff running responsibly. But legally, often: yes. For instance, organisations may have rules and regulations regarding ‘cloud use’. Or they have stakeholders, from the government to large clients, who want to be in control and therefore have cloud-specific requirements and policies. And then it matters what you mean when you say ‘cloud’.

The variety of ‘cloud’ service types these days often turns a NIST-like definition into an oversimplification. Following a famous maxim, often erroneously attributed to Albert Einstein and generally presented without its key second part:

In every field of inquiry, it is true that all things should be made as simple as possible – but no simpler. (And for every problem that is muddled by over-complexity, a dozen are muddled by over-simplifying).

Sydney Harris, Chicago Daily News, Jan. 2, 1964

we should probably conclude that the NIST definition of cloud has become so much of an oversimplification that it is more often than not unhelpful. Offerings are often a mixture of service models and even deployment models. A cloud policy that proudly states “we will only do PAAS and not IAAS” is impossible by definition, because there simply is no pure PAAS offering in the public cloud. Period.

Confusion is rife too. An architect I know was recently asked something like the following by a senior manager: “Our Azure Infra Domain 3, is that on-premises?” Apparently, someone had argued that deploying an application on an Azure PAAS made it a cloud application that should fall under a ‘cloud policy’. Someone else, equally confused, countered that since they had deployed the application themselves on a PAAS under their own control, the application ran on their own (Azure) infrastructure, so it was ‘on-premises’ and did not fall under the cloud policy.

A far better basis for your policies is probably not a specific technology stack at all, let alone a definition of such a stack from over 10 years ago, when it was still in its infancy.

Aside: the meaning of on-premises is likely to change in the coming years. It used to be ‘our own bricks and mortar, physical security, power and cooling’. It has become ‘our own electronics, even if it resides in some co-hosting data center’. It may become ‘whatever infrastructure we control ourselves, regardless of who controls the electronics’. The phrase seems to move up the stack every time people discover that they haven’t actually outsourced what they thought they were outsourcing. Uncle Ludwig was right.

Which brings us to a potentially cleaner way to look at it.

The Key Concept is Outsourcing

When you use the cloud, the key concept is actually outsourcing, not whether it is self-service, etc. When you get something from an external provider, be it IAAS, PAAS, or SAAS, you get something that is owned by someone else and that you use. What all ‘cloud’ offerings have in common is that, in every combination of service models, the one thing you always outsource is the actual hardware. The shortest definition of ‘cloud’ thus might be ‘someone else’s data center’. It is the cloud provider that plugs in cables, replaces worn-out components, decides on hardware choices, takes care of hardware monitoring, etc.

There is a simple definition of outsourcing that can help. It starts with a definition of ownership, one could say an ‘agile’ definition of ownership:

You own what you can change.

Me. Now.

Outsourcing follows from this:

Every part of what you use that you cannot change has effectively been outsourced.

Me. Now.

This ‘change-based’ definition of outsourcing is slightly broader than customary. For instance, it includes systems that you license but run on your own infrastructure, be it cloud or not. If you license a system to calculate something for you and you cannot change the algorithm that it uses, you have effectively outsourced that algorithm to your vendor. You do not actually know what it does under the hood: neither its primary function nor other essentials such as how secure it is internally. On the other hand, when you use open source code, you can change it, and from the perspective of outsourcing you are an ‘owner’. You’re just not the exclusive owner. By the way: maybe we should call the licensing of systems “Executable as a Service (EAAS)”, but I digress, as usual (and it is silly nonsense).
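The change-based rule is simple enough to write down as a one-line classification. The Python sketch below assumes a hypothetical `Component` record with a single `can_change` flag; the component names are illustrations drawn from the examples above, not an inventory of any real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    """Hypothetical part of a system you use."""
    name: str
    can_change: bool  # can *you* change it (its algorithm, code, or data)?


def is_outsourced(component: Component) -> bool:
    # "You own what you can change": anything you cannot change is outsourced.
    return not component.can_change


def split_ownership(components: list[Component]) -> tuple[list[str], list[str]]:
    """Return (owned, outsourced) component names, preserving input order."""
    owned = [c.name for c in components if not is_outsourced(c)]
    outsourced = [c.name for c in components if is_outsourced(c)]
    return owned, outsourced


components = [
    Component("licensed calculation algorithm", can_change=False),
    Component("open source library", can_change=True),  # owned, though not exclusively
    Component("our own configuration", can_change=True),
]
owned, outsourced = split_ownership(components)
print(owned)       # ['open source library', 'our own configuration']
print(outsourced)  # ['licensed calculation algorithm']
```

Note that where a component runs plays no role in the rule at all, which is exactly why licensed software on your own servers counts as outsourced just as much as a SAAS offering does.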

But for the rest it is pretty easy. For instance, a spy agency like the NSA or CIA in the US will generally have policies against outsourcing almost anything. Their data is so sensitive that they even want the bricks and mortar under their own control; their cloud will always be private. Others, like banks, may at this point say that they want to control the hardware as well, but this may change, and some new banks may already be fully based on public cloud (or virtual private cloud). An insurance company may be OK with outsourcing to AWS or Azure. Most large organisations will still have some sort of cloud/on-premises mix for the foreseeable future.

As the article mentioned above states:

Suppose you open up some of your cloud-based systems to access from the public internet? You can do that. And suppose you shouldn’t have, because these systems contain sensitive data? And suppose this data is stolen in a very public breach? Who is to blame? Microsoft for providing you with enough rope to hang yourself, or you? It is clear that if this is a big news story, the heading will not be “Microsoft was lax with its security and management”, but “Company X was lax with its security and management in the cloud“.

The many lies about reducing complexity part 2: Cloud

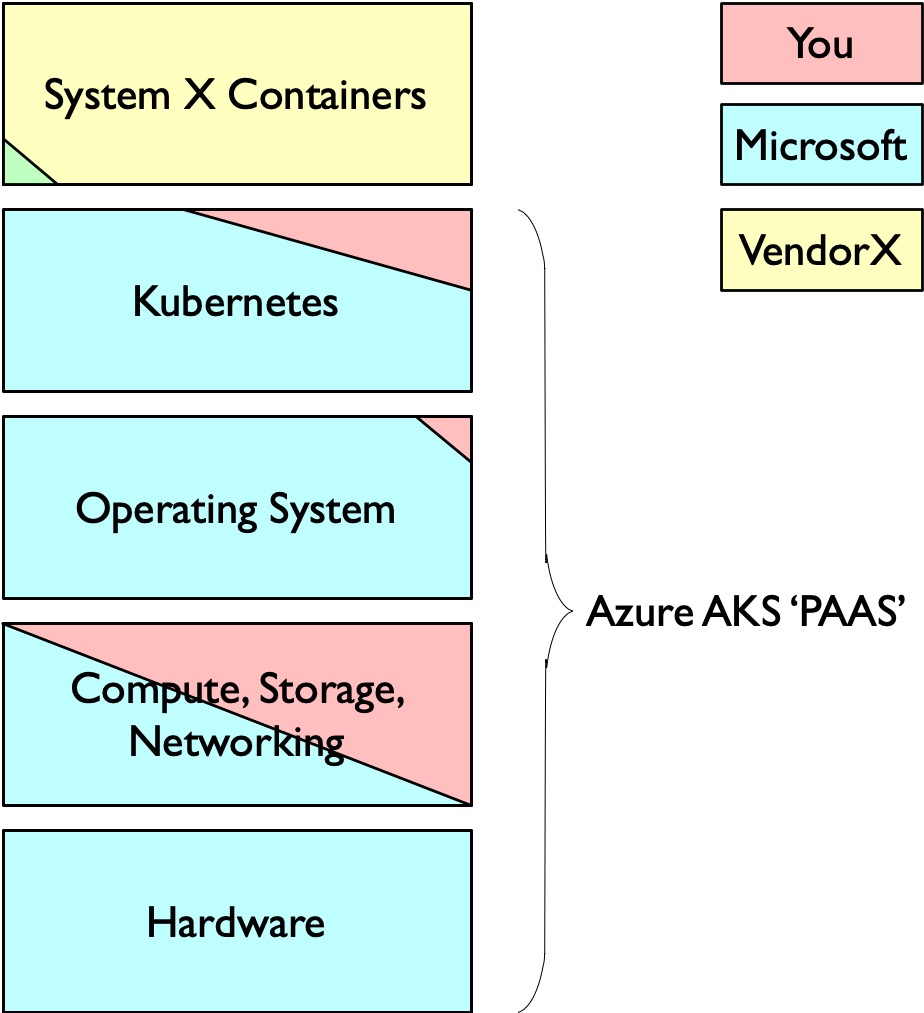

Suppose your firm CompanyA uses the Microsoft Azure’s AKS (Azure Kubernetes Services) ‘PAAS’ to run a system it has licensed from VendorX (so you install the ‘containers’ from VendorX in Azure’s AKS, just as you used to install some licensed software on your on-premises servers).

A picture might help here:

The yellow stuff is behaviour you have effectively outsourced to VendorX; the only thing you do is deploy it. There is a small ‘but’ here, because you have some influence. For instance, if VendorX releases a new version, you may opt not to install it and keep using the older version. Such ‘laziness’ is becoming less and less of an option, as vendors increasingly force their customers to update via support agreements, but technically it remains an option. So this is not perfect ‘outsourcing’ according to the definition above.

The reddish areas illustrate your ‘ownership’: in this case you own the configuration (data) of it all (and if you use Configuration as Code, you own the algorithm that produces the configuration). You own what you can change. Potentially this even extends to a bit of the operating system (who knows what Microsoft has to change in the OS because of some option they give you).
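The picture can also be read as a small table of layers and who can change each one. The Python sketch below encodes one possible reading of the CompanyA/VendorX/AKS example; the layer list is a deliberate simplification (a real AKS deployment has many more parts), and the `Layer` type, its `influence` field, and the layer names are hypothetical. The `influence` field records the ‘small but’: choices such as declining a VendorX upgrade, which give influence without ownership.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Party(Enum):
    COMPANY_A = "CompanyA"
    VENDOR_X = "VendorX"
    MICROSOFT = "Microsoft"


@dataclass(frozen=True)
class Layer:
    """Hypothetical, simplified layer of the CompanyA deployment on AKS."""
    name: str
    changed_by: Party                # the party that can actually change this layer
    influence: Optional[str] = None  # what CompanyA can do short of changing it


# One possible reading of the picture, bottom to top (an assumption, not a spec).
STACK = [
    Layer("hardware", Party.MICROSOFT),
    Layer("operating system", Party.MICROSOFT, "indirectly, via options chosen in AKS"),
    Layer("AKS platform", Party.MICROSOFT),
    Layer("AKS configuration", Party.COMPANY_A),
    Layer("VendorX containers", Party.VENDOR_X, "deploy or not, or keep an older version"),
]


def describe(stack: list[Layer], me: Party) -> list[str]:
    """Label each layer as owned by `me` or outsourced, noting any residual influence."""
    lines = []
    for layer in stack:
        if layer.changed_by is me:
            status = "owned"
        else:
            status = f"outsourced to {layer.changed_by.value}"
        if layer.influence:
            status += f" (influence: {layer.influence})"
        lines.append(f"{layer.name}: {status}")
    return lines


for line in describe(STACK, Party.COMPANY_A):
    print(line)
```

The word ‘cloud’ appears nowhere in the model: the same table would describe VendorX software installed on CompanyA’s own servers, with only the lower rows changing hands.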

In short

Forget about ‘cloud’; it is not a discriminating enough concept. End your ‘cloud policies’ or cloud-specific procedures. Instead, concentrate on managing the key issue underlying it: the ever more complex outsourcing of algorithms and data, some of which happens by using ‘cloud’. It’s fine to have, for instance, an ‘AWS competence center’ (so not a ‘cloud competence center’), just as you may have an Oracle team. Or a specialist team for any technology platform.

Featured Image by Hannah Wernecke on Unsplash

PS. Oh, and that little green triangle in the diagram above? Well, suppose you get a container from VendorX: will you blindly deploy it in your AKS on Azure? Or do you want to process it in your vulnerability scanning setup first? Then that check is something you do yourself, but as you do not change the container (you just pass or block it), you still do not ‘own’ what is inside. But what if you use some sort of vulnerability scanning service that is part of Azure? In that case, you have outsourced your container vulnerability scanning algorithm and data to Microsoft. There is no end in sight to the complexity of what we are building in the IT world.

“Such is life. And it gets sucher every day.” (Peter de Smet — De Generaal)

