In March 2019, I published a story about Quantum Computing, or QM Computing, (To be and not to be — is that the answer?) addressing the hype about QM Computing, explaining how it really works, what it potentially might do if successful, and what the actual — not to be underestimated — bottlenecks are. The reason for writing the story was that I observed quite a bit of hype, some of it directed at unsuspecting parties in the area of ordinary business that were told that they should take QM Computing into account in their expectations for the coming years, maybe even already invest in it. So, I wrote to deflate that hot air balloon a bit.

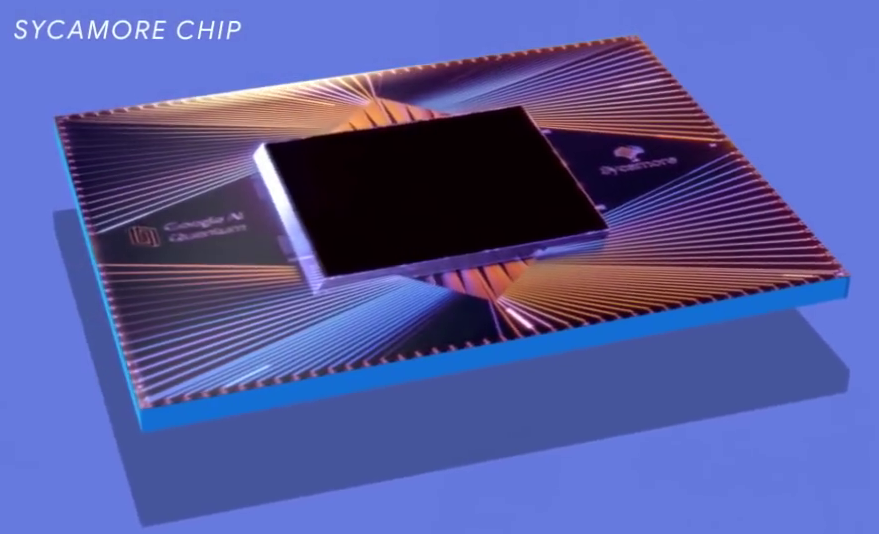

Half a year later, Google announced that its Sycamore QM Computer had passed the threshold for quantum supremacy, telling the world that it calculated in mere minutes something that would take a digital supercomputer 10,000 years. Food for a renewal of the high expectations at least, even if IBM quickly countered that a digital supercomputer actually could do the calculation in 2.5 days, not 10,000 years. Yes, Google had been overdoing the messaging a bit, but nonetheless we had a small QM Computer hugely outperforming a digital supercomputer. So, egg on my face and forget about that critical look at QM Computing, right?

To answer that question, I’d like to (finally, I already had this lying around for ages) follow up with a story trying to make clear to (reasonably) normal people what — if anything — has changed.

First, here is a short recap of that previous article (though I advise to read the original so you understand how QM Computing really works, this here below is really too condensed):

- QM Computing uses qbits instead of bits. Instead of holding a single, certain bit value (0 or 1) that can be read, a qbit holds a chance of producing either a 0 or 1 bit value when read. This can be seen as holding both 0 and 1 at the same time;

- By using multiple qbits, a QM Computer can calculate on all possible combinations of bit values in parallel. So, for instance, instead of using the 256 (the possible number of values for 8 bits) values one by one, in a 8-qbit QM computer we can work with them all at once;

- However, that sadly does not mean you have immediately that massive parallel speedup, because qbits produce a random value when being read, such is the ‘chance’ nature of quantum mechanics after all. So, your QM algorithm must work in such a way that at the end of the algorithm — through destructive and constructive interference — all qbits are in a state where they reliably produce an intended 0 or 1 (i.e. as a result of the algorithm, the chance of reading a 0 or a 1 must be 99.9999% or so). Otherwise, the QM Computer just produces random output and not the useful result of an actual calculation.

- There are three bottlenecks to get to a functioning QM Computer:

- It is very hard to build qbits; This is why the largest QM Computers have only around 50-100 of them (this is called the width of the computer and thus the algorithms that can run on it);

- Qbits are fundamentally uncertain when read, so come with high error rates. We need either lower error rates or many more qbits to get around the errors using ‘error correction’;

- QM Computers are unstable, it is very hard to run algorithms on them that need a long series of ‘steps’ (the number of steps is called the ‘depth’ of the algorithm). An algorithm with about 40 steps is just about the max these days;

- But the main problem is this: to actually make tactical use of a QM Computing, we need QM algorithms that produce those reliable outcomes, as mentioned above, and after decades of work we only have a very small set of algorithms (about 2 or 3) for a few very specific problems.

I mentioned in the previous post the article by John Preskill, in which he actually coined the term ‘quantum supremacy’. Quantum supremacy is the point at which QM Computers will become able to do calculations that no classical computer can ever do in reasonable time. Google claimed in 2019 to have reached that point.

What Google actually did (in layman’s terms)

Google used a 54-bit QM Computer. Google generated random algorithms to run on that QM Computer (so, not useful algorithms, but something with random steps, as if you would create a calculation by randomly applying operations such as addition, subtraction, etc. on your input numbers). Each such an algorithm was run millions of times, with all qbits set to 0 initially. The output of running each specific random algorithm for millions of times is a set of millions of output values where some values have a higher chance of appearing and others less so. Earlier research had shown that it was possible to do a limited calculation on the resulting dataset of millions of produced numbers that show that the result is what you would expect if the QM Computer functions properly. Confused? You should be (unless you’re a specialist).

So here is an even simpler way to look at it: Google did a hardware test and found the machine functioned as it should. It has a machine with a low enough error rate and long enough stability that it can reliably run QM algorithms. These algorithms, remember, do not really exist. So it is not surprising that Google (correctly) ends the article with “We are only one creative algorithm away from valuable near-term applications”. True. Quoting the aforementioned inventor of the term ‘quantum supremacy’, John Preskill:

the team has verified that they understand their device and that it performs as it should. Now that we know the hardware is working, we can begin the search for more useful applications.

John Preskill, Why I Called It ‘Quantum Supremacy’

So this is nothing? Not at all. This is truly an impressive engineering result. Before this, we were still uncertain if it was at all possible to create such machines. But the crazies and enthusiasts who are not particularly bothered by either facts or knowledge will jump (have jumped) on it and argue that QM Computing is around the corner. Which is utter nonsense.

Takeaway

Let’s return to the question that John Preskill asked in 2012 and that was quoted in the previous post: “Is controlling large-scale quantum systems merely really, really hard, or is it ridiculously hard?” and follows up with “In the former case we might succeed in building large-scale quantum computers after a few decades of very hard work. In the latter case we might not succeed for centuries, if ever” [It. GW].

In other words, it seems from Google’s result that working large scale QM Computers is not ridiculously hard (a.k.a. more or less impossible), but only really, really hard. So, after a few decades of very hard work, we might have machines that can perform calculations no normal digital computer can.

If someone invents a useful algorithm that can run on it, that is.

[Update 14/Sep/2021. In the previous article I wrote: You have to admire the physicists for their capability of getting money for physics. What I was inferring there is that QM Computing could be very powerful as a sort of analog computing that mimics physical systems (such as molecules, or fluid dynamics, etc.). So, not as a generic speedup for digital IT problems, but as simulations of physical systems to solve physical problems. Such use might come already when we go somewhat beyond what the current state of the art, such as Google’s Sycamore, can deliver. “He who predicts the future lies, even if he is telling the truth” as the saying goes, but my guess is that the manufacturing and chemical/pharmaceutical world might be among the first businesses to profit from Quantum Computing first and I think it is not unlikely that we will see this within the coming ten years.]

In summary: does Google’s ‘Quantum Supremacy’ means QM Computing has arrived? Nope. So definitely watch out for the crazies (in Dutch: wappies) that convincingly tell you it is all real already, and paint either utopias or horror stories of super intelligent robots or broken encryptions. Such horror stories are fantasy. For real horror, you need not go beyond human ingenuity…

Afterword

- Quantum Computing and Quantum Internet are often thrown together in some sort of QM Computing revolution. They are completely different beasts. Quantum Internet (internet where a man-in-the-middle attack is impossible) is a technically feasible use of quantum technology. Don’t let someone sell QM Computing to you using QM Internet developments.

- There is — as I mentioned in the previous article — a useful algorithm that is always mentioned in discussions about QM Computing: Shor’s Algorithm. It is an algorithm to factor a number into its primes. Such an application could be useful to break public key encryption, which is widely used (e.g. on the web, that little lock you see in your browser). Real life public key encryption uses 2048-bit keys these days and it can easily grow. In a few decades, if QM Computing hardware gets at that scales with a stability to match, or a much larger scale so error correction might be attempted, such encryption might become weak.

There are one or two other potentially useful algorithms. The lack of QM algorithms is not because we haven’t been trying to find these. We’ve been looking for decades already (Shor’s was invented in 1994), illustrating how hard it is to even find a single useful algorithm for QM Computers. Given that Shor’s algorithm is one of the very few useful algorithms, it is interesting to note that it was able to factor 15 into 3×5 in 2001, 21 into 3×7 in 2012, and in 2019 (quite recently) IBM attempted to factor 35 into 5×7 and failed because of accumulating errors. Did I already mention QM Computing is hard? And should I mention a 2048-bit key is roughly a number with 650 decimal digits, not 2? Nâh. Or that Shor’s is not effective against symmetric encryption? So that using Quantum Internet to exchange keys for symmetric encryption and using symmetric encryption would already stop Shor in its tracks? Double nâh.

We should not make the opposite error of the crazies and suggest QM Computing is completely impossible for ever. But for the foreseeable future, it is safe to assume it is. - For a short remark on quantum annealing (which isn’t really QM Computing even if it is marketed as such), see the previous article.

Image by Google, CC BY 3.0, https://commons.wikimedia.org/w/index.php?curid=109155716

Previous article: To be and not to be — Is that the answer? (The fundamentals of QM computing)

Next article: Google’s ‘Willow’ quantum computer: impressive science and misleading marketing (Google’s next step ‘Willow’ and its error correction results)

PS. Ugh! The original publication had a nasty typo/grammar error in the title (still visible in the URL). And more sloppy errors. Shame on me.

4 comments